Scalable Web Scraping and Data Extraction Solutions

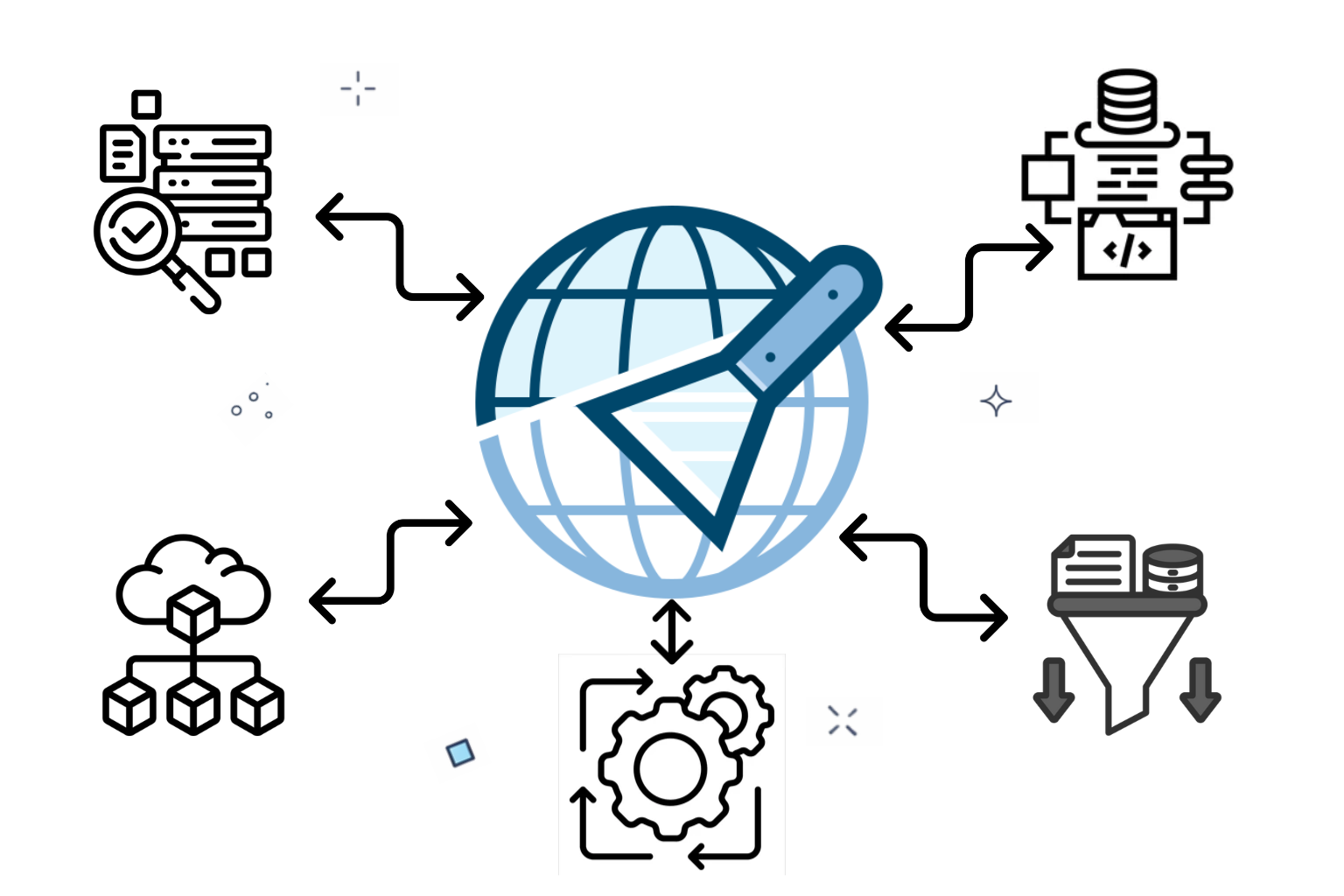

We design scalable web scraping systems that extract structured, reliable data from diverse websites . Our solutions automate data collection, handle dynamic content, and deliver clean, well-organized datasets through APIs or scheduled pipelines.

Business Impact Delivered Through Web Scraping

Web scraping enables faster decisions, reduced manual effort, and continuous access to critical market data.

Reduction in manual data collection costs

Faster access to real-time data

Accuracy across extracted business datasets

Automated data monitoring and updates

Web Scraping Services Built for Scale

Automated data extraction pipelines collect data from complex, dynamic web sources into clean, structured, reliable datasets.

Website Data Extraction

Collect structured information from pages, listings, tables, and dynamic elements.

Dynamic Content Scraping

Handle JavaScript-rendered sites using headless browsers and smart waiting strategies.

Anti Bot Handling

Bypass blocks with rotating proxies, headers, throttling, and fingerprint management.

Scheduled Data Crawling

Run automated crawlers on schedules for updates and change detection.

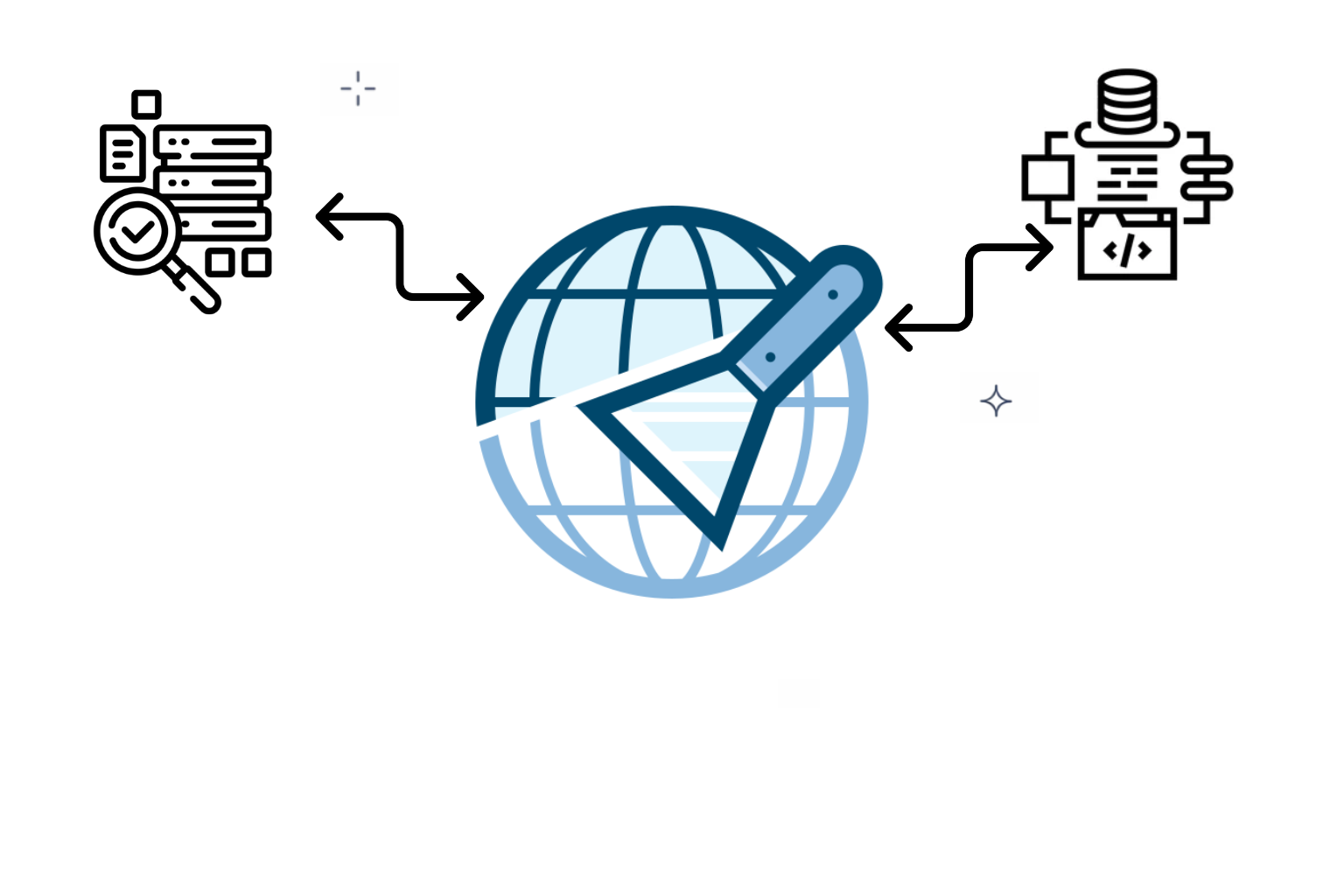

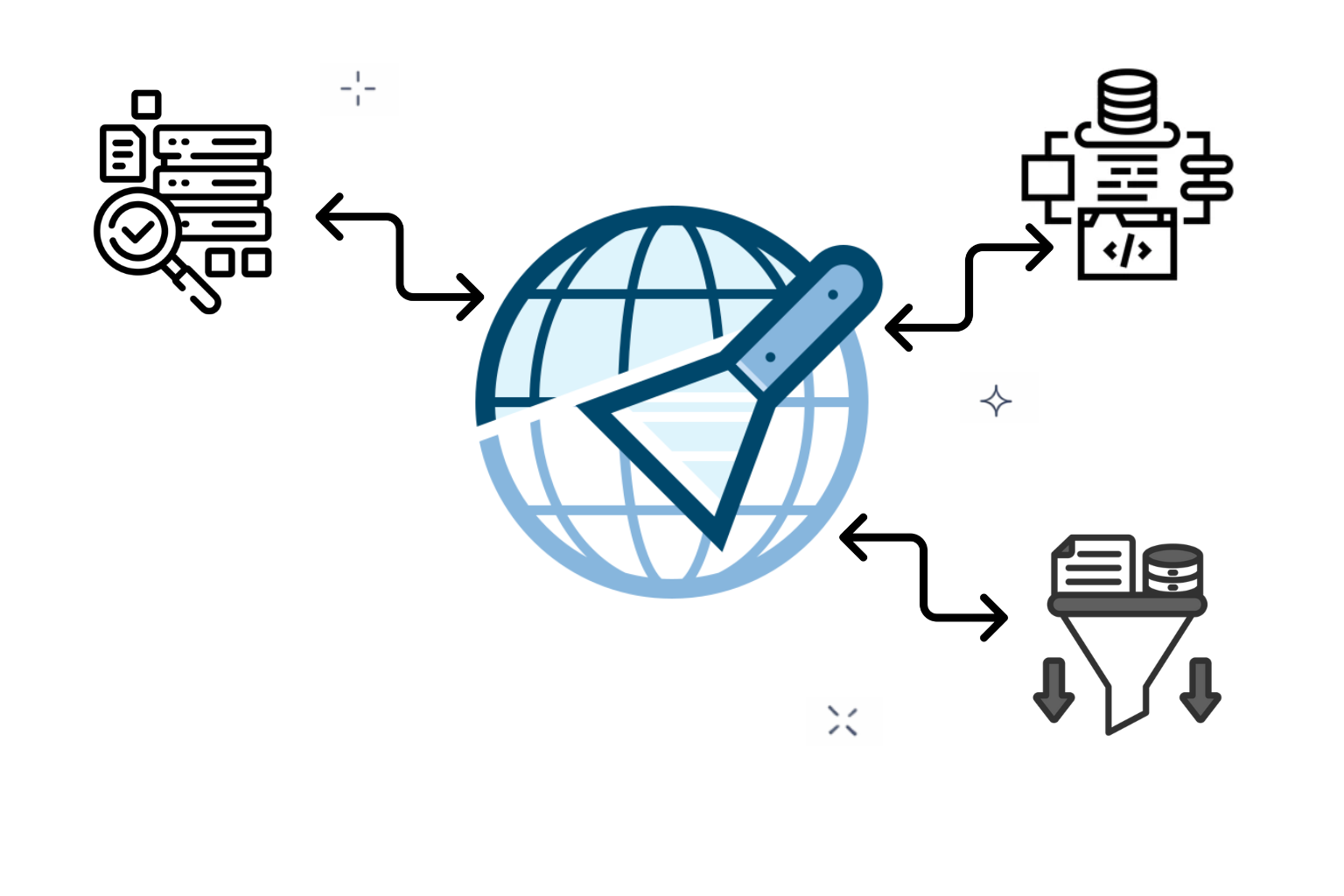

API Data Delivery

Expose scraped data via secure APIs or push pipelines downstream.

Custom Scraping Scripts

Python-based scripts tailored to unique sites, logic, volumes, and formats.

Data Cleaning Processing

Normalize, deduplicate, validate, and enrich datasets before storage or analytics.

Need Reliable Web Data Consistently Without Manual Effort

Book Scraping DemoTechnologies Powering Our Web Scraping

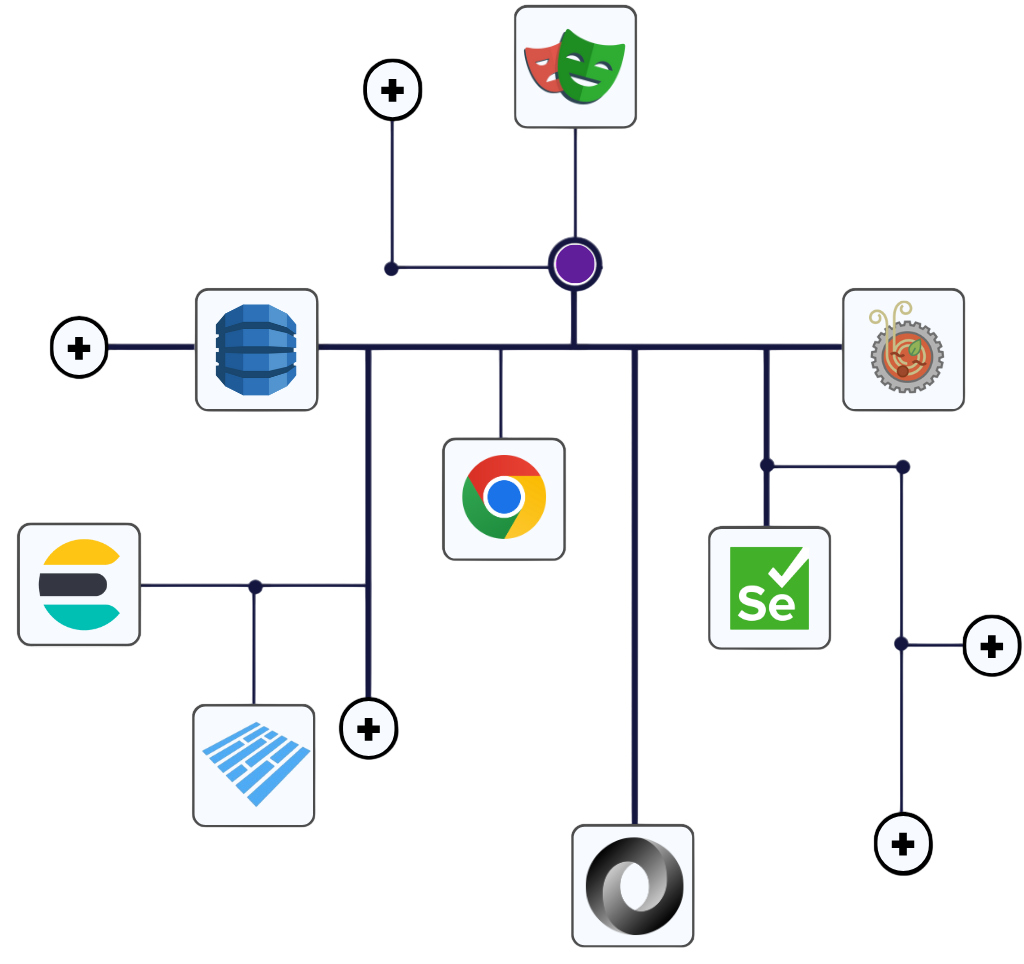

Proven scraping and automation technologies engineered to collect, process, and deliver high-quality web data reliably.

Python

Node.js

TypeScript

Go

Python

Node.js

TypeScript

Go

Tesseract OCR

Playwright

Puppeteer

Selenium

Scrapy

BeautifulSoup

Requests

Cheerio

AWS

Apache Airflow

Celery

Cron

PostgreSQL

MongoDB

REST APIs

Webhooks

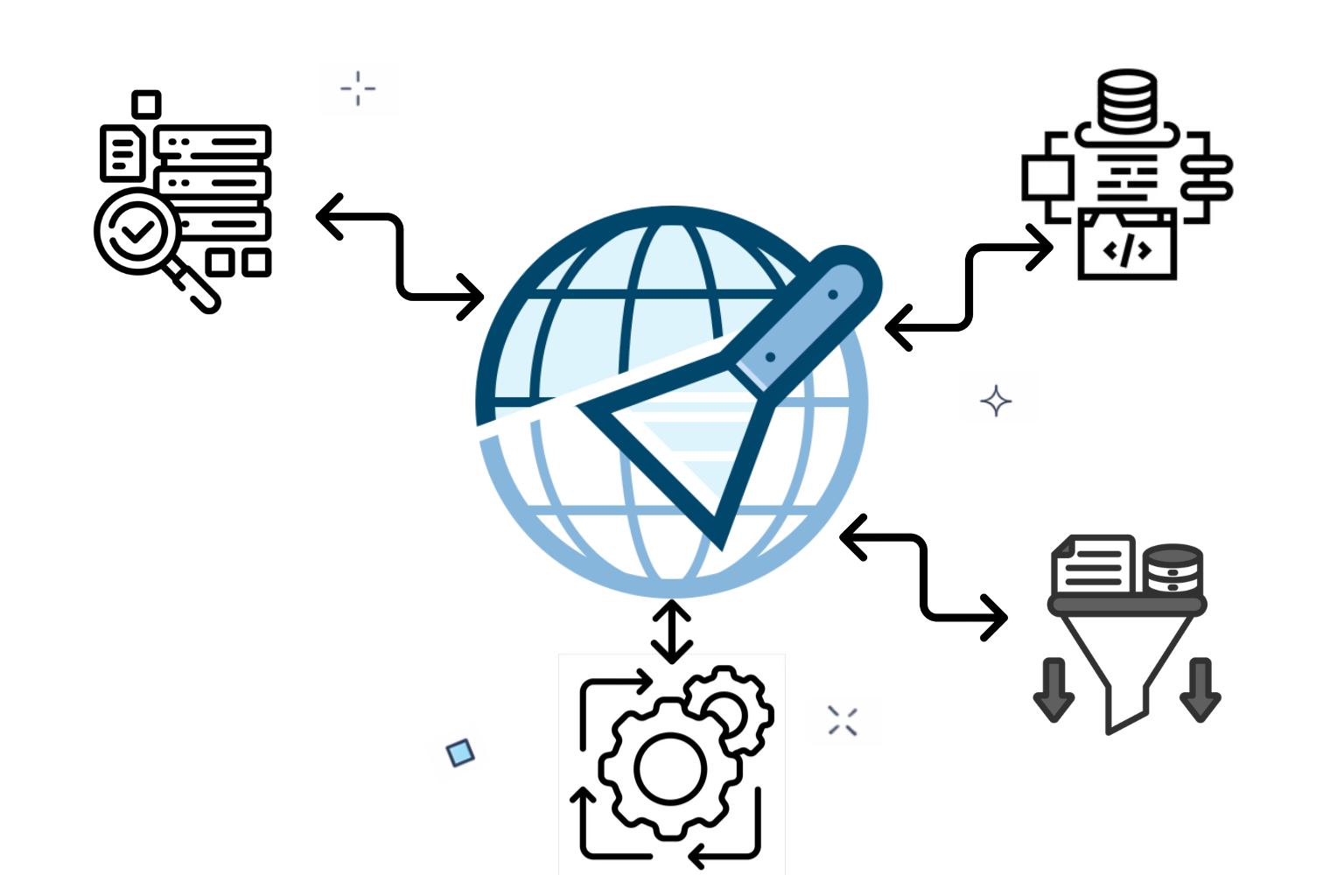

How Our Scraper Development Process Works

Dataspan Technologies delivers reliable web data through a structured scraping workflow focused on accuracy, scalability, and compliance.

Define Scope and Targets

Analyze target websites, data fields, update frequency, legal considerations, and complexity to define precise scraping scope, success metrics, and long-term maintenance requirements early.

- Identify sources structures constraints before development starts

- Assess risks compliance limits access patterns early

- Align goals timelines stakeholders expectations clearly together

Engineer Reliable Scraping Architecture

Architect scraping scripts, crawling logic, proxy rotation, throttling, and error handling to maximize reliability, performance, and adaptability across diverse websites and platform changes.

- Select tools frameworks libraries matching scraping requirements

- Design retries delays rotation preventing blocks effectively

- Plan modular scripts for future enhancements easily

Collect Clean Structured Data

Execute robust scrapers to collect structured and unstructured data from static and dynamic pages accurately at required volumes without loss or duplication issues.

- Handle javascript pagination sessions and authentication seamlessly

- Maintain speed accuracy under high loads consistently

- Log failures retries outcomes for visibility tracking

Enable Continuous Data Pipelines

Schedule scraping jobs, monitor changes, trigger alerts, and orchestrate workflows ensuring continuous data freshness with minimal manual intervention through automated pipelines and controls.

- Automate schedules updates alerts without supervision needed

- Detect site changes and adapt scripts quickly

- Ensure uninterrupted data delivery operations across systems

Expand Volume Without Failure

Optimize performance, expand coverage, and support growing data volumes while maintaining stability, compliance, and long-term operational efficiency for enterprise grade scraping programs globally.

- Scale infrastructure proxies workers as demand grows

- Continuously optimize scripts for performance and cost

- Provide ongoing maintenance monitoring improvements and support

Engineered for Scraping.

Designed for Scale.

Specialized tools enabling resilient scraping, adaptive scripting, and scalable data pipelines across complex web environments.

Build Your Scarper Solution

Live Web Scraper Demo

Experience how our AI-powered engines transform unstructured web content into clean, business-ready data in seconds.

No data generated yet.

Click "Start Scraper" to begin

extraction.

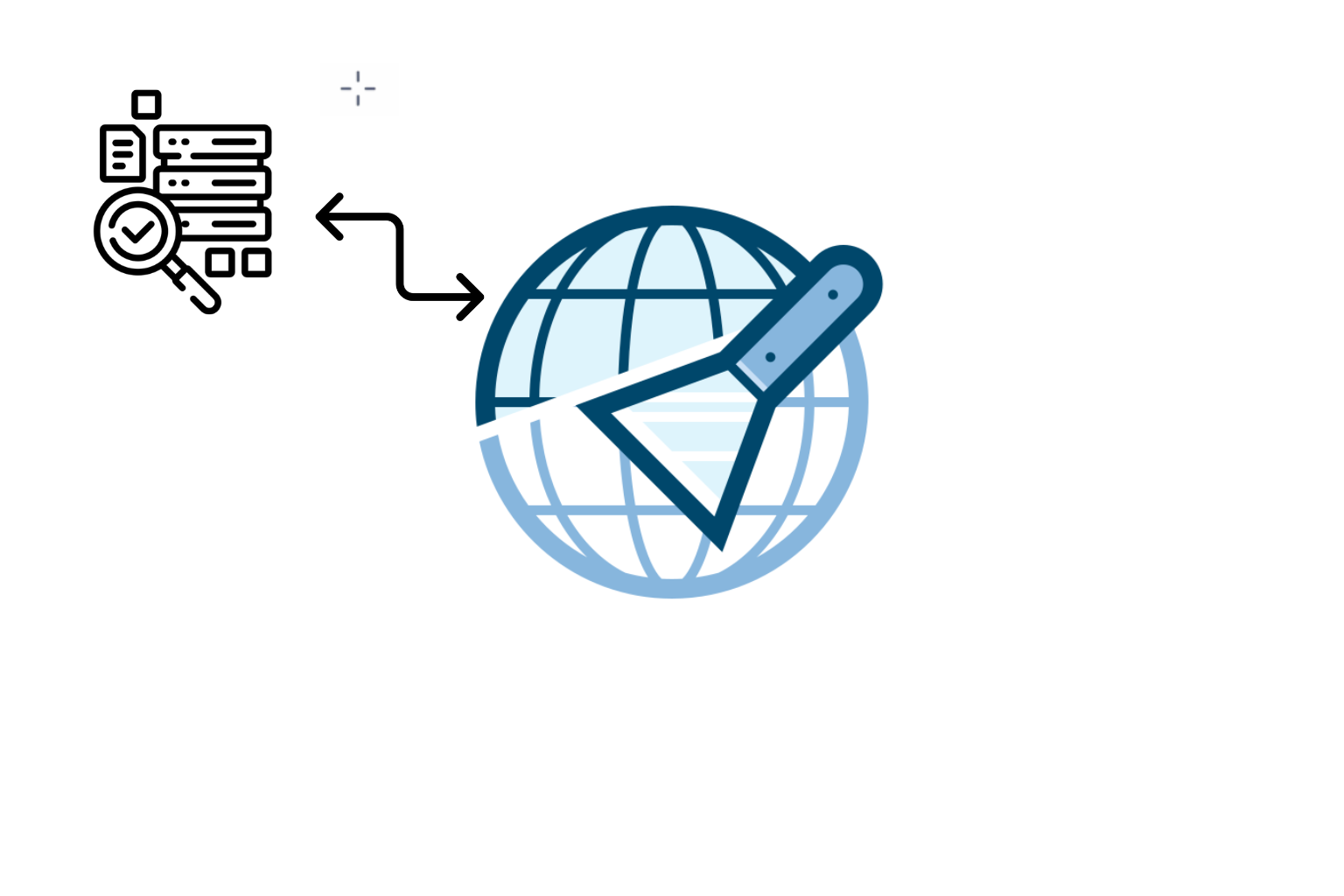

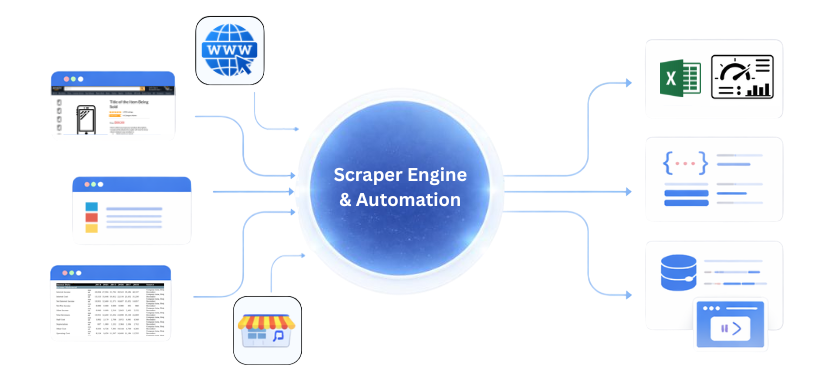

From Scripts to Scalable Data

Extract, process, and deploy data scraped from multiple websites using automated scripts that deliver structured outputs into business-ready systems.

Turn Web Data Into Competitive Advantage

Automate data collection from multiple websites, reduce manual effort, and gain reliable insights with scalable scraping solutions.